The Cultural Architecture of Answer Extraction

I've been observing how B2B organizations talk about Answer Engine Optimization, and beneath the technical terminology, something more fundamental is shifting.

This isn't just a new SEO tactic.

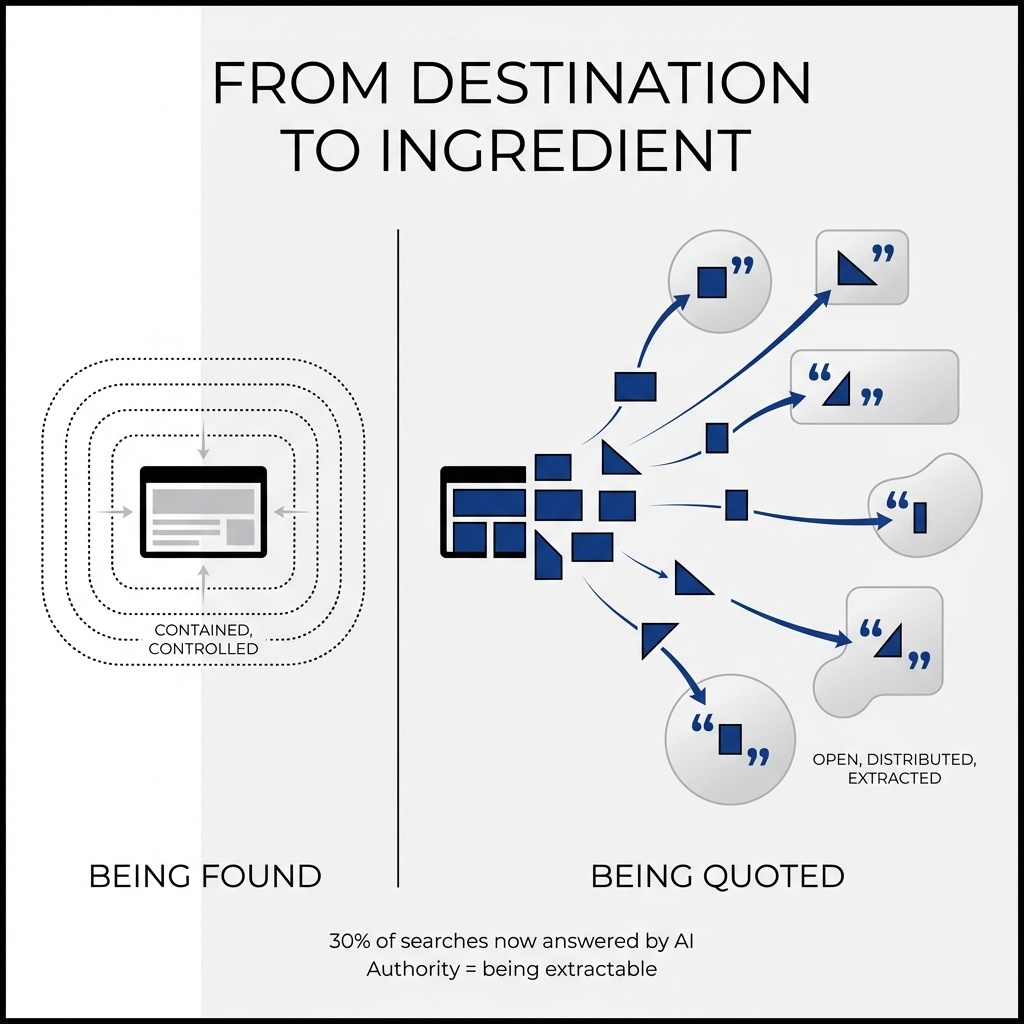

It's evidence of a worldview transformation in how organizations construct authority—moving from the ritual of "being found" to the doctrine of "being quoted."

The Binary Opposition: Discoverability vs. Extractability

The linguistic distinction between these two concepts reveals shifting power structures in information architecture.

Traditional SEO operated on a cosmology of ownership: rank your pages, control the SERP, drive traffic to properties you control. The mental model was territorial: dominate page one, capture the click, own the journey.

AEO introduces a different belief system entirely: becoming the ingredient rather than the destination.

When B2B teams implement FAQ schema, HowTo markup, and Organization structured data, they're performing a ritual of presenting themselves to machine judgment. Only 12.4% of websites use Schema.org markup at all. That low adoption is what gives the practice its symbolic weight: it separates those who "speak the machine language" from those who remain algorithmically illegible.

The data confirms the scale of this shift: ChatGPT reached 800 million weekly active users by October 2025, while Google AI Overviews exploded from appearing in 6.49% of queries in January 2025 to over 30% by late 2025.

First impressions now happen inside AI summaries, not on homepages.

The Ritual Performance of Structured Data

Structured data markup functions as confession in canonical form—organizations standing up in the algorithmic town hall and declaring: "This is who we are, what we do, and how our knowledge is organized."

I see this ritual serving several cultural functions:

Naming themselves to the machine. Organization and Person schema represent a formal request to be recognized as distinct, referenceable entities rather than undifferentiated text in the knowledge graph.

Performing compliance and competence. Proper markup signals operational discipline and technical literacy, which themselves function as authority signals. Teams treat validation and adherence to schema.org guidelines as an ongoing governance ritual, not a one-off implementation.

Seeking elevation into privileged surfaces. Schema operates as a petition: "Please promote us from a generic blue link to a featured position." Teams measure success by being quoted in AI answers, appearing in panels, and becoming a default reference.
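In practice, the "naming themselves to the machine" step above usually takes the form of a JSON-LD block embedded in a page. The following is a minimal sketch of that, assuming a made-up company ("Example Corp"), placeholder URLs, and helper names (`organization_jsonld`, `as_script_tag`) invented for illustration. Only the schema.org vocabulary itself (`Organization`, `name`, `url`, `sameAs`) is standard.

```python
import json


def organization_jsonld(name, url, same_as):
    """Build a schema.org Organization object as a JSON-LD dict.

    Hypothetical helper: the function name and argument names are
    illustrative, not part of any library.
    """
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        # sameAs links the entity to its other public profiles
        "sameAs": list(same_as),
    }


def as_script_tag(data):
    """Serialize a JSON-LD dict into the script tag placed in a page's HTML."""
    # Escape "</" so a stray "</script>" inside a value cannot close the tag early.
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return '<script type="application/ld+json">\n' + body + "\n</script>"


# Placeholder values, assumed for the example only.
org = organization_jsonld(
    "Example Corp",
    "https://www.example.com",
    ["https://www.linkedin.com/company/example-corp"],
)
print(as_script_tag(org))
```

The markup states very little: a name, a URL, and a list of profiles. That is part of the point made above. The declaration is small, and the weight lies in making it at all and keeping it valid.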

This reveals a cosmology where authority is something conferred upward by systems that curate and rank, rather than primarily by relationships with human buyers.

The Linguistic Shift: From Persuasion to Extraction

The language in FAQ and HowTo content sacrifices flourish, surprise, and voice in exchange for being safely quotable by machines.

I observe specific patterns:

Direct question-answer pairing replaces creative hooks. Instead of "Reimagine your RevOps engine," teams write "What is a RevOps strategy?" followed by one-sentence definitions that can stand alone out of context.

Front-loaded answers replace rhetorical build-up. FAQ answers lead with the gist in one or two sentences, on the working assumption that LLMs preferentially extract opening summaries. Traditional marketing builds tension; FAQ language suppresses it for immediate, clippable resolution.

Modular building blocks replace flowing prose. Content breaks into numbered steps with imperative verbs because lists parse cleanly into structured outputs.
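The question-answer pairing described above maps directly onto schema.org's `FAQPage` type, where each `Question` carries one `acceptedAnswer`. Here is a rough sketch of how a team might generate that markup. The helper name `faq_page_jsonld` and the sample answer wording are assumptions made for illustration, not taken from any real playbook.

```python
import json


def faq_page_jsonld(pairs):
    """Turn (question, answer) pairs into a schema.org FAQPage dict.

    Hypothetical helper: each answer is expected to stand alone,
    with the gist in its first sentence.
    """
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


# Illustrative content only; the definition is a placeholder.
faq = faq_page_jsonld(
    [
        (
            "What is a RevOps strategy?",
            "A RevOps strategy is a plan for aligning sales, marketing, "
            "and customer success operations around shared revenue goals.",
        ),
    ]
)
print(json.dumps(faq, indent=2))
```

The structure leaves no room for a hook or a build-up: the question is the `name`, the answer is the `text`, and nothing else fits in the schema.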

The catechism for machines is recognizable by what it eliminates: the very qualities that make content memorable and persuasive to humans are stripped away to serve algorithmic extraction.

Research confirms this tension: ChatGPT mentions brands 3.2 times more often than it cites them, and commercial intent queries drive 4-8 times more mentions than purely informational ones.
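To make the mention/citation distinction concrete, here is a small sketch of how such a ratio could be computed from a set of collected AI answers. Everything in it is assumed for illustration: the brand names, the domains, the sample answers, and the simple definitions (a "mention" is the brand name appearing in the answer text, a "citation" is the brand's domain appearing among the linked sources). It does not reproduce the methodology behind the figures above.

```python
from urllib.parse import urlparse


def count_mentions_and_citations(answers, brand, domain):
    """Count answers that mention the brand and answers that cite its domain."""
    mentions = 0
    citations = 0
    for answer in answers:
        # Mention: brand name appears anywhere in the answer text.
        if brand.lower() in answer["text"].lower():
            mentions += 1
        # Citation: a source URL is hosted on the brand's domain or a subdomain.
        hosts = [urlparse(url).hostname or "" for url in answer["sources"]]
        if any(h == domain or h.endswith("." + domain) for h in hosts):
            citations += 1
    return mentions, citations


# Hypothetical sample of four answers; not real data.
sample = [
    {"text": "Acme and Globex both offer RevOps tooling.",
     "sources": ["https://www.acme.example/guide"]},
    {"text": "Acme is often recommended for mid-market teams.",
     "sources": ["https://reviews.example.org/list"]},
    {"text": "Popular options include Acme.", "sources": []},
    {"text": "Globex focuses on enterprise.",
     "sources": ["https://globex.example/docs"]},
]

mentions, citations = count_mentions_and_citations(sample, "Acme", "acme.example")
ratio = mentions / citations if citations else float("inf")
print(mentions, citations, ratio)  # prints: 3 1 3.0
```

In this toy sample the brand is named in three answers but linked in only one, which is the same shape of gap the research describes.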

Being mentioned in the answer itself holds more value than being cited in the footnotes—a fundamental inversion of traditional attribution hierarchies.

The Measurement Paradox: New Status Hierarchies

When B2B organizations start tracking AI overview impressions, snippet visibility, and branded citations, the internal scoreboard changes.

Power tilts toward people who can design, instrument, and interpret "system-facing" authority.

Search and content strategists who understand answer engine optimization suddenly own the metrics that explain why brands appear (or don't) in AI overviews. They become translators between vague "visibility" and concrete numbers executives can rally around.

Analytics and RevOps teams who can connect AI visibility metrics to pipeline and revenue gain disproportionate influence. As zero-click scenarios grow, executives rely on these people to justify spend without the old comfort of last-click attribution.

Brand leaders with a systems lens get a seat at the performance table by framing AI visibility as category-shaping influence rather than another SEO vanity metric.

Meanwhile, channel-only performance marketers see their dashboards de-centered. Classic SEO practitioners anchored to rank reports lose status to those discussing citation share and authority weight.

These new metrics don't just add another column to reports—they redraw the org chart in miniature.

Authority accrues to whoever can consistently make the brand the system's first-choice explainer and prove that invisible influence compounds into visible revenue.

The Unresolved Contradiction

The core tension I observe: AEO tells brands to chase visibility in systems they don't control, while simultaneously demanding a depth and integrity of knowledge practice that those same systems don't reliably reward or even handle correctly.

Organizations are pushed toward ultra-concise, extraction-ready answers, even though this simplification can hollow out the very expertise that's supposed to justify authority.

You end up with an official doctrine that says "be the most trusted expert" and an operational doctrine that says "compress, flatten, and standardize your thinking so machines can skim it."

The industry hasn't resolved how much nuance it's willing to sacrifice.

AEO assumes answer engines will reward accuracy and reliability, yet the underlying systems hallucinate, misattribute, and confidently spread false information—with reputational and legal risk landing on the brand, not the model.

Organizations invest heavily in training and feeding arbiters whose judgments are opaque and sometimes wrong, while bearing full responsibility when those arbiters misrepresent their knowledge.

This asymmetry remains unaddressed in most AEO playbooks.

The rise of AEO exposes a deep shift: B2B organizations are no longer the sole narrators of what they "know." They are participants in an algorithmically mediated knowledge economy where being right, being trusted, and being understandable to machines collapse into the same requirement.

Authority is something you earn, demonstrate, and maintain in public, not something brand guidelines can simply declare.
