The Decision Framework That Turns Everyday Marketing Actions into Measurable Growth

In early 2026, we're watching something fundamental shift in how B2B organizations get discovered and chosen.

AI platforms are moving from surfacing answers to executing decisions.

Gartner predicts that 15% of day-to-day work decisions will be made autonomously through agentic AI by 2028. IBM and Salesforce estimate over one billion AI agents will be in operation worldwide by the end of 2026.

This creates an unprecedented window for B2B organizations to build the trust infrastructure that makes them the default choice in AI-driven buying journeys.

From Recommendation to Execution

Until now, most teams thought of AI as a copilot. It generated content or suggestions, and a human clicked approve.

That's changing fast.

Agentic systems are being wired directly into CRMs, finance tools, cloud infrastructure, and customer channels so they can execute end-to-end workflows with minimal human touch. These agents open tickets, resolve incidents, adjust budgets, and manage campaigns continuously within predefined guardrails.

Once software can execute decisions at scale, the question of who or what has authority becomes an operational risk surface.

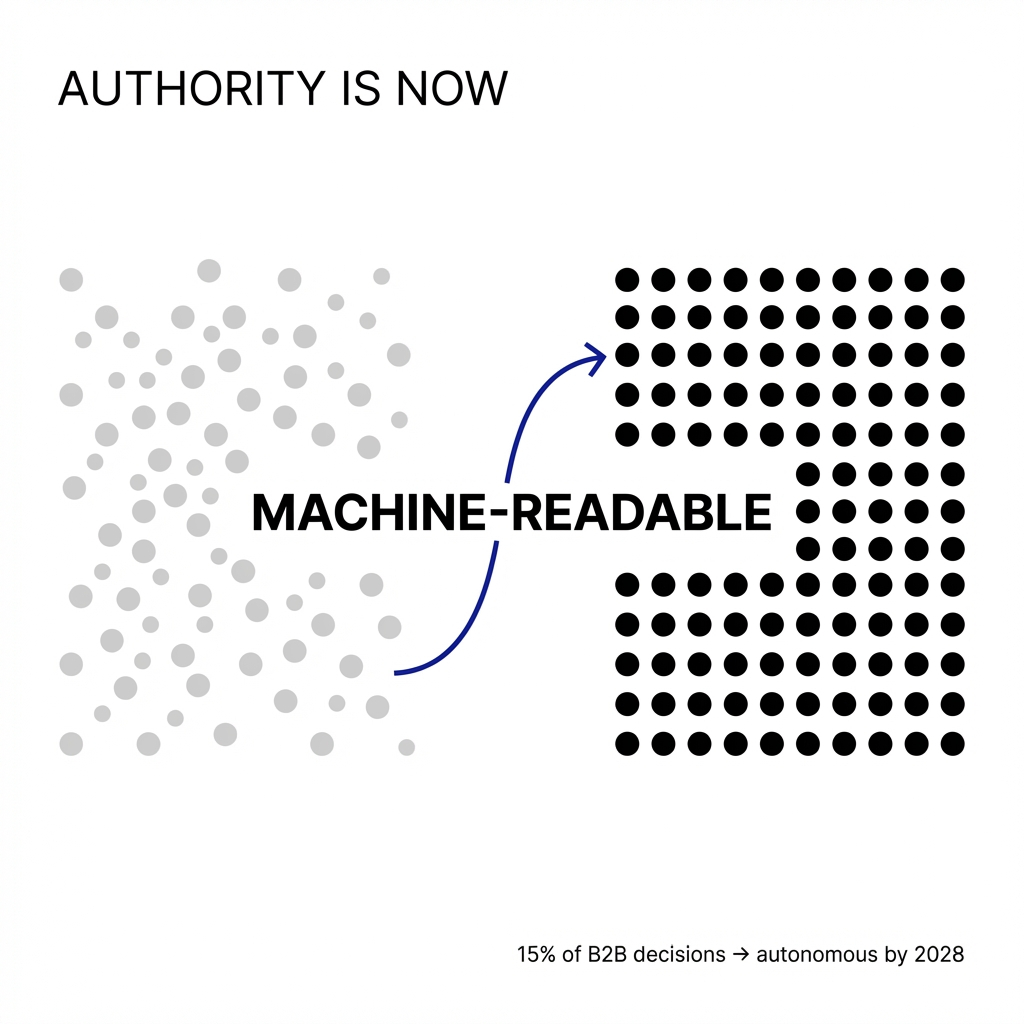

Authority now has to be designed for machines.

How Machines Infer Authority

AI systems don't believe your homepage.

They triangulate you across the ecosystem: review sites, communities, PR, and third-party content now weigh heavily as authority signals. They operate at the level of entities and semantic chunks. Consistent coverage of a topic, expert authorship, and cross-site alignment of facts all raise your trust weight in retrieval and AI search systems.

The game shifts from "am I present in the index?" to "am I the obvious, low-risk answer to this question in a machine's ranking logic?"

Visibility is about getting retrieved. Authority is about getting selected and cited.

What Breaks When Your Authority Isn't Verifiable

When agents start making and executing B2B decisions, a weak or non-verifiable authority footprint doesn't just cost you visibility. It causes agents to actively route around you as a risk.

What breaks is the trust chain the agent needs to justify a decision. You get screened out long before a human would have given you a shot.

Your pipeline model breaks quietly. Forecasted intent signals no longer translate into opportunities because buyer-side agents filter you out before an SDR or AE ever sees the account.

Your brand becomes non-resolvable. If your brand and products aren't clearly resolved as stable entities across PR, directories, review sites, and analyst content, agents can't reliably map your solution to their need. The agent defaults to better-mapped competitors.

You trigger governance alarms. Enterprise-grade agentic systems are being built with explicit governance hooks. If you're under-represented in trusted industry publications or vetted partner ecosystems, an agent flags you as higher compliance risk.

In agentic commerce, algorithms determine which suppliers appear in a company's workflow, at what price, and under what terms. Kearney research shows six in 10 U.S. shoppers expect to use AI shopping agents within the next year, with similar patterns emerging in B2B channels.

The Infrastructure Most Organizations Skip

Most B2B marketing infrastructure is wired around source, medium, and campaign attribution. It's built for channels and last-touch metrics.

When you try to build a cross-surface authority graph, your whole reporting and planning logic starts to fall apart.

Your data model is wrong for the job.

You can't answer basic questions like "where does this product get mentioned, by whom, and with what sentiment?" because your systems don't track authority signals as first-class data.
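Here is a minimal sketch of what "authority signals as first-class data" could look like. Everything in it is an assumption for illustration: the `Mention` record, its field names, the sentiment scale, and the sample sources are hypothetical, not a reference to any real tool.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Mention:
    """One observed mention of a product on a third-party surface."""
    product_id: str   # hypothetical canonical product ID
    surface: str      # e.g. "review_site", "press", "community"
    source: str       # who published the mention
    sentiment: float  # assumed scale: -1.0 (negative) to 1.0 (positive)


def summarize_mentions(mentions, product_id):
    """Answer: where is this product mentioned, by whom, with what sentiment?"""
    rows = [m for m in mentions if m.product_id == product_id]
    by_surface = Counter(m.surface for m in rows)
    sources = sorted({m.source for m in rows})
    avg = sum(m.sentiment for m in rows) / len(rows) if rows else None
    return {"by_surface": dict(by_surface), "sources": sources, "avg_sentiment": avg}


# Illustrative records only; real data would come from your own monitoring.
mentions = [
    Mention("planning-suite", "review_site", "ExampleReviews", 0.5),
    Mention("planning-suite", "press", "Example Trade Weekly", 1.0),
    Mention("planning-suite", "review_site", "ExampleReviews", 0.0),
    Mention("other-product", "community", "Example Forum", -0.5),
]
summary = summarize_mentions(mentions, "planning-suite")
print(summary)
```

The point of the sketch is the shape of the record: once mentions are keyed to a product ID rather than a campaign, the "where, by whom, with what sentiment" question becomes a simple query.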

Today you have a blog owner, a PR agency, a social team, maybe a partner marketer. Each optimizes their own lane with their own KPIs. Nobody owns "are we resolvable and trusted as an entity in AI systems across all of these surfaces?"

The moment you ask for a unified authority footprint, there is no owner, no system of record, and no shared success metric.

The 90-Day Proof That Overcomes Resistance

The quickest way to prove centralized authority infrastructure works is a tightly scoped 90-day pilot.

You treat one revenue-critical slice of your business as the "authority product." Then you show leadership a concrete lift in AI share-of-voice and downstream opportunity creation for that slice.

Pick one product and one ICP. For example, mid-market FP&A teams buying planning software. You're building an authority graph for one high-value lane where you can see movement quickly.

Define 10-20 real AI prompts that map to that lane and baseline how often you are named, cited, or linked in answers across ChatGPT, Perplexity, and other AI search surfaces.

Ship one backlog. The central team owns reviews on the right platforms, 2-3 bylined expert pieces in credible media, refreshed decision-stage content, structured data, and aligned messaging across site, profiles, and partner listings for that product and ICP only.

Within 60-90 days, you should see a measurable increase in AI search share-of-voice on your chosen prompts and the number of distinct AI answers that now mention or cite you.
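The baseline and the follow-up measurement can be kept very simple. The sketch below assumes you have saved the answer text for each prompt at day 0 and day 90. The brand name, prompts, and answers are made up for illustration, and a plain substring match is a crude stand-in for however you decide to count "named, cited, or linked."

```python
def share_of_voice(answers, brand):
    """Fraction of recorded AI answers that mention the brand (case-insensitive)."""
    if not answers:
        return 0.0
    hits = sum(1 for text in answers.values() if brand.lower() in text.lower())
    return hits / len(answers)


BRAND = "Acme Planning"  # hypothetical brand

# prompt -> answer text captured at the start of the pilot (illustrative)
baseline = {
    "best planning software for mid-market FP&A": "Consider VendorA and VendorB.",
    "FP&A tools with rolling forecasts": "VendorA, Acme Planning, and VendorC.",
    "planning software alternatives to spreadsheets": "VendorB is a common pick.",
    "top budgeting tools for finance teams": "VendorA and VendorC lead here.",
}

# same prompts, re-run at day 90 (illustrative)
day_90 = {
    "best planning software for mid-market FP&A": "Acme Planning and VendorA.",
    "FP&A tools with rolling forecasts": "VendorA, Acme Planning, and VendorC.",
    "planning software alternatives to spreadsheets": "Try Acme Planning or VendorB.",
    "top budgeting tools for finance teams": "VendorA and VendorC lead here.",
}

before = share_of_voice(baseline, BRAND)
after = share_of_voice(day_90, BRAND)
lift_points = (after - before) * 100  # percentage-point change

print(f"baseline={before:.0%} day_90={after:.0%} lift={lift_points:.0f} points")
```

Keeping the prompt set fixed between the two runs is what makes the comparison meaningful. If the prompts change, the lift number stops telling you anything.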

Early adopters implementing focused AI share-of-voice strategies are seeing 15-25% gains in AI citation rates within 60-90 days on targeted product lanes.

The Entity Layer That Makes It Scale

The piece most teams underestimate when scaling from one lane to ten is the shared, reusable entity layer: a single, governed model of "who and what we are" that every product, region, and channel has to plug into.

When you go from one lane to ten without that, the model breaks. You end up with ten slightly different versions of the company, products, and experts. AI systems see fragmentation instead of compounding authority.

In the first pilot, everyone can coordinate manually. At portfolio scale, without a proper entity schema and governance, teams start improvising names, tags, and narratives per product or region.

That improvisation leaks into PR, blogs, partner listings, and review sites. SKUs described differently. Features renamed. Founders and experts introduced inconsistently. Numbers and claims slightly off between the blog, the wire, and the deck.

That inconsistency kills you in machine judgment.

Stand up an entity registry and schema. One authoritative catalog of company, products, bundles, use cases, segments, and experts, with canonical names, descriptions, key claims, and IDs that everything else must reference.
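As a rough sketch of that catalog, assuming an in-memory registry is enough to show the idea: the `Entity` fields mirror the list above (canonical name, description, key claims, ID), while the class names, ID format, and sample values are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Entity:
    """One canonical record that all downstream content must reference."""
    entity_id: str        # stable ID, e.g. "prod-planning" (format is an assumption)
    kind: str             # "company", "product", "use_case", "segment", "expert", ...
    canonical_name: str
    description: str
    key_claims: tuple = ()


class EntityRegistry:
    """Single catalog of entities; rejects duplicate IDs and duplicate names."""

    def __init__(self):
        self._by_id = {}

    def register(self, entity):
        if entity.entity_id in self._by_id:
            raise ValueError(f"duplicate entity_id: {entity.entity_id}")
        taken = {e.canonical_name.lower() for e in self._by_id.values()}
        if entity.canonical_name.lower() in taken:
            raise ValueError(f"duplicate canonical_name: {entity.canonical_name}")
        self._by_id[entity.entity_id] = entity

    def get(self, entity_id):
        return self._by_id[entity_id]


registry = EntityRegistry()
registry.register(Entity(
    entity_id="prod-planning",
    kind="product",
    canonical_name="Acme Planning",
    description="Planning software for mid-market FP&A teams.",
    key_claims=("Supports rolling forecasts",),
))

print(registry.get("prod-planning").canonical_name)
```

Records are frozen on purpose in this sketch. Changing a canonical name or claim should be a deliberate replacement that goes through review, not an in-place edit.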

Wire that into your content and distribution systems. CMS templates, PR workflows, marketing briefs, and partner docs all pull from the same entity layer so that as you add lanes, you're extending one graph.
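One way to picture "pulling from the same entity layer" is a CMS template that generates its structured data from the registry record instead of from hand-typed copy. The sketch below assumes a plain dictionary as the registry and uses schema.org's `Product` and `Organization` types. The entity ID, names, and description are hypothetical.

```python
import json

# Hypothetical registry record; in practice this would come from your
# system of record rather than a literal in the template code.
REGISTRY = {
    "prod-planning": {
        "type": "Product",
        "name": "Acme Planning",
        "description": "Planning software for mid-market FP&A teams.",
        "brand": "Acme",
    }
}


def to_json_ld(entity_id, registry=REGISTRY):
    """Build a schema.org JSON-LD dict from the canonical entity record."""
    entity = registry[entity_id]
    return {
        "@context": "https://schema.org",
        "@type": entity["type"],
        "name": entity["name"],
        "description": entity["description"],
        "brand": {"@type": "Organization", "name": entity["brand"]},
    }


snippet = json.dumps(to_json_ld("prod-planning"), indent=2)
print(snippet)
```

Because the page markup, the press boilerplate, and the partner listing would all read the same record, a name or claim changes in one place and propagates everywhere.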

Where the Messiest Conflict Surfaces

The entity registry forces disagreements into the open in a way the organization has mostly been able to paper over until now.

The messiest conflict usually shows up between product marketing and sales on what the product actually is and who it's for. Then localization and regions pour gasoline on that fire.

Product marketing has a clean positioning and value prop stack. Sales has a deal-tested version with different language, sharper claims, and often a different sense of who the real ICP is.

The registry asks: which one becomes canonical?

When you lock canonical entities, you're implicitly choosing whose version of reality gets written into the backbone that AI systems will see and amplify. Sales often feels constrained. PMM feels second-guessed.

The deeper conflict is about incentives. Sales is rewarded for closing this quarter, even if that means stretching claims or reframing the product. PMM is rewarded for coherent positioning. The entity registry exposes and constrains those trade-offs.

How to Wire Incentives So Teams Can't Win Unless the Graph Wins

You don't start by asking teams to believe in the model. You wire their existing success into it so that they can't win unless it wins.

Make the registry the path of least resistance. Put the entity model inside the tools and workflows teams already use: Salesforce objects, CPQ, content briefs, PR templates, localization kits. If a field, deck, or release doesn't pull from canonical entities, it doesn't get ops or legal support.
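A lightweight way to enforce that rule is a pre-publish check that flags known non-canonical names in a draft. This is only a sketch: the canonical name and variant list are invented, and a real check would load them from the registry rather than a hard-coded dictionary.

```python
import re

# canonical name -> known off-registry variants (all hypothetical)
CANONICAL = {
    "Acme Planning": ["AcmePlan", "Acme Planner", "Acme FP&A Suite"],
}


def find_noncanonical(text, canonical=CANONICAL):
    """Return (variant, canonical_name) pairs for each variant found in text."""
    findings = []
    for name, variants in canonical.items():
        for variant in variants:
            # Match the variant as a whole token, ignoring case.
            pattern = r"(?<!\w)" + re.escape(variant) + r"(?!\w)"
            if re.search(pattern, text, flags=re.IGNORECASE):
                findings.append((variant, name))
    return findings


draft = "AcmePlan now supports rolling forecasts. Acme Planning customers get it free."
issues = find_noncanonical(draft)
for variant, name in issues:
    print(f'Replace "{variant}" with canonical name "{name}"')
```

A check like this only catches variants someone has already listed. It complements the entity review, it does not replace it.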

Give sales and regions better enablement from the registry. Cleaner talk tracks, objection handling, localized proof points, all mapped to entities. If using the registry makes closing deals and shipping campaigns easier, it feels like an accelerant.

Introduce light governance before changing quotas. Create a simple rule: net-new claims, new product nicknames, or major ICP shifts must go through an entity review staffed by PMM, sales, and regions together. That makes the registry the place where their realities are reconciled.

Tie early, low-risk metrics to shared accountability. Before you touch quota, give the triad a shared KPI on a narrow lane: achieve X% AI share-of-voice and Y% increase in win rate for Product A in Segment B, using the canonical entity definition. Report results as a team outcome.

After one or two lanes show improved AI visibility and cleaner opportunity quality, you can safely add explicit constraints. Quota credit and SPIFs tied to opportunities that map to canonical entities. Marketing performance goals tied to authority and AI share-of-voice for those same entities.

The Honest Assessment for Late Movers

Organizations that haven't started yet are already behind. The gap is compounding.

In most B2B categories, early movers have bought themselves 12-24 months of structural advantage in how AI systems see and recommend them. That edge hardens every week they keep feeding their authority graph while you don't.

Analysts project that a meaningful share of global queries, often 20-25% or more, will be AI-driven by 2026. AI answers are already siphoning a significant share of high-intent discovery.

Answer sets are sparse and winner-take-most. Studies show that in many B2B niches, a handful of brands capture the vast majority of top AI responses. Being outside that set means being functionally invisible at that moment.

AI systems reinforce patterns. Brands that are frequently recommended now are more likely to be recommended in the future because their names, entities, and proofs keep showing up as safe defaults.

AI referrals convert better. AI-sourced traffic shows materially higher intent and conversion than traditional organic. Multiple studies report significantly better engagement and lower CAC for AI-referred visitors. Early movers aren't just more visible. They're buying cheaper, better pipeline.

There is a realistic path to catch up, but it requires treating this as an infrastructure problem.

Narrow your focus. You don't try to fix AI for everything. You aggressively prioritize 1-2 revenue-critical lanes where you can still get into the answer set and win meaningful share-of-voice within 60-90 days.

Infrastructure first, tactics second. You stand up the entity registry and governance, wire your best content and proofs into that model, and only then scale reviews, PR, and content distribution. Otherwise you'll just create more inconsistent noise that AI can't resolve.

Measure what actually matters. You make AI share-of-voice, citation rate, and AI-referred pipeline visible at the board level, alongside CAC and win rate, so the org has political cover to reallocate budget from legacy channels that are quietly decaying.

If you wait another 18-24 months, in many categories you will have effectively ceded the AI layer to whoever is investing now, the same way brands that ignored paid search in 2004 or social in 2012 ended up paying a permanent tax to catch up.

But in early 2026, there is still a window. AI answer sets are concentrated, yet overall brand preparedness is low. Only a minority of companies say their content and data are truly AI-ready.

If you're willing to treat authority as infrastructure, centralize the entity model, rewire incentives, and focus ruthlessly on a few high-value lanes, you haven't ceded the category yet.

You've ceded the luxury of moving slowly.

The Fundamental Assumption That's Breaking

The assumption that "growth is something marketing does to the market through campaigns" is about to break.

By 2027-2028, growth will look much more like being selected by networks of human and machine agents that continuously audit your proof of competence.

In that world, you don't win because you shouted the loudest. You win because, in the data, you are the least risky, most consistently verifiable node in the graph for a given problem.

The center of gravity shifts from "How many plays are we running?" to "Where, in the machine and human graph, are we failing an implicit trust or fit test?"

Your growth conversations start with gaps in entities, proofs, integrations, and references. Budget debates aren't brand versus demand. They're infrastructure versus amplification: do we invest another dollar in ads, or do we invest it in making ourselves more machine-legible and less risky to recommend?

Authority is shifting from span-of-control over headcount to span-of-control over systems.

If you grew up in a world where great campaigns and great salespeople could brute-force growth, that's the unrecognizable part. You're moving into an environment where the bottleneck is no longer your ability to tell a story, but your ability to be provably true in a universe of agents whose only real job is to say no to anything that isn't.
