Why Your AI Pilots Keep Falling Short

Your marketing team has the tools. You ran the pilots. You saw the demos.

And nothing stuck.

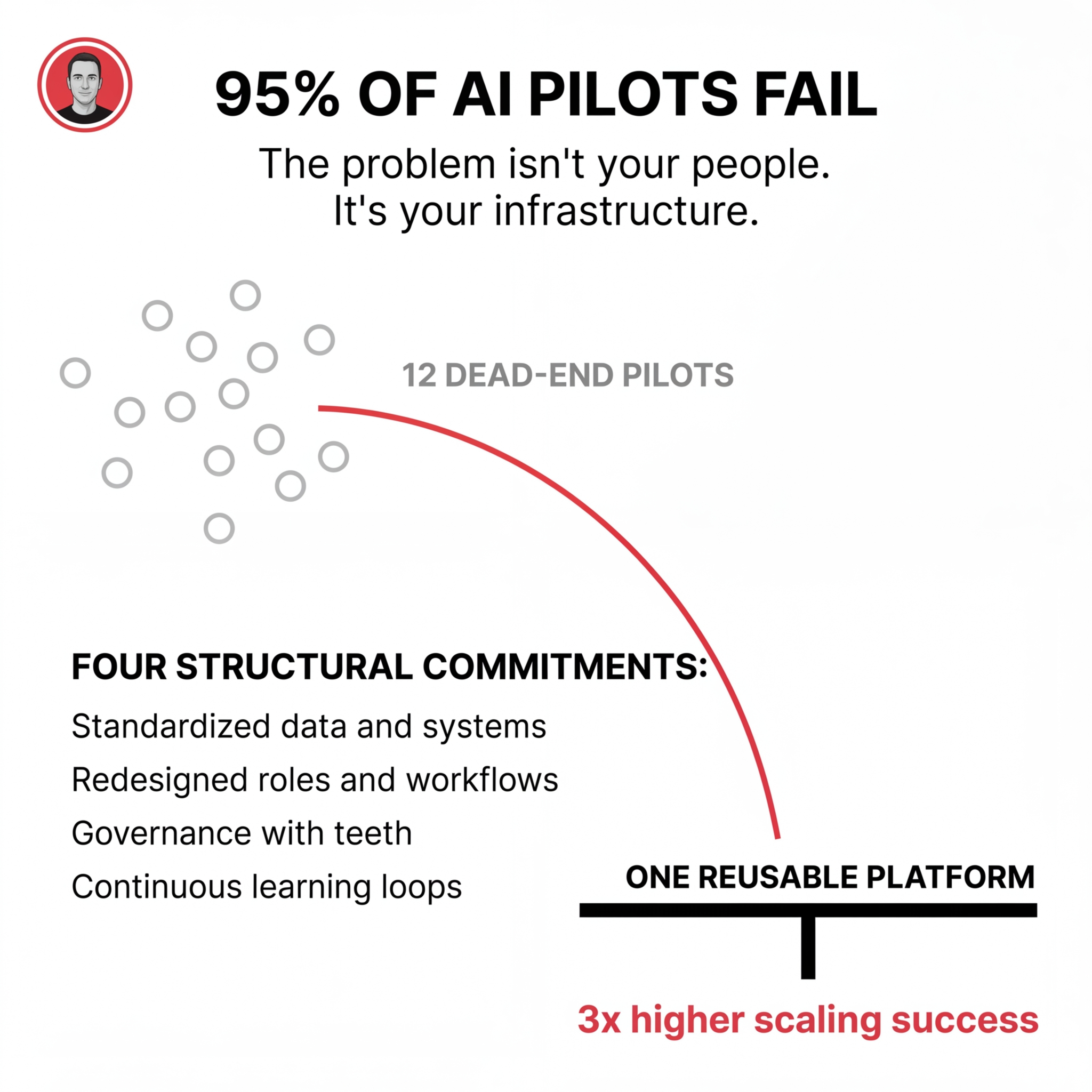

The pattern shows up everywhere. 95% of generative AI pilots fail to achieve rapid revenue acceleration. 88% of AI proofs-of-concept never reach production. The share of organizations that abandoned AI initiatives jumped from 17% to 42% in a single year.

The problem isn't your people. It isn't the technology.

It's that you're treating AI as software to learn instead of a system to engineer.

The Real Gap Isn't Skills

When marketing teams struggle with AI adoption, the surface symptoms look like capability problems. Skills gaps. Training needs. Resistance to change.

Look deeper.

The actual problem is structural. Most teams don't have a defined AI strategy, clear use cases, or success criteria. Tools get bought on hype, bolted onto existing workflows, and left to a few power users without shared standards.

Without a spine connecting AI to how your business creates revenue, outputs feel random and risky. That reinforces fear, erodes trust, and makes resistance look rational.

Training upgrades individual skills. But training alone doesn't change incentives, workflows, data access, or decision rights. Usage spikes briefly after workshops, then people slide back into old habits.

You need infrastructure.

What Infrastructure Actually Means

Infrastructure isn't a platform you buy. It's how you rewire your company so AI becomes the default way work gets done.

That requires four structural commitments:

Standardized data and systems

Clear ownership of core domains. Quality rules and data contracts so AI can reliably pull from a single source of truth. A retrieval layer that every team plugs into, instead of each team bolting ChatGPT onto its own spreadsheets.

Redesigned roles and workflows

Job definitions and SOPs where AI steps are explicitly embedded. The default process assumes a human-machine team. Career paths and handoffs reflect that some activities are now machine-led.

Governance with teeth

Clear policies about where AI is required, permitted, or prohibited. Auditability and compliance baked into the tools themselves. Performance management that rewards AI-powered outcomes so people are paid to change.

Continuous learning loops

Feedback captured at the system level so models and workflows improve over time. AI treated like a product, with releases, retros, metrics, and a backlog.

Organizations that build this backbone from day one achieve 3x higher scaling success than those who prototype first and harden later.

The Pilot Trap

Here's what happens when you skip the backbone.

Every pilot rebuilds plumbing. Each team stands up its own data pipelines, integrations, security reviews, and governance in miniature. You pay the foundation tax over and over again.

Most pilots never scale. They stall before production or meaningful ROI, leaving behind duplicated infrastructure, fragmented data, and compliance risk.

The real choice isn't small pilots versus big bets. It's 12 dead-end proofs of concept versus one reusable platform that can power 12 production use cases.

With shared infrastructure and guardrails in place, new AI use cases spin up in weeks instead of months. Data access, compliance patterns, and deployment paths already exist. A platform approach amortizes infrastructure, security, and operations across all use cases instead of funding them separately in each pilot.

Twelve months later, backbone-first organizations have a repeatable machine that turns ideas into production workflows at marginal cost. Pilot-stuck organizations have stories and screenshots.

What This Looks Like for Marketing

Marketing teams face a specific version of this problem. You're over-built for campaigns but lack infrastructure thinking.

You have dashboards that don't match. Three different numbers for the same metric. When an exec asks a simple question, you pull data from multiple sources and hope they align.

You optimize for visibility instead of systematic value creation. Blog posts and campaigns that live in isolation. Content chaos instead of reusable assets.

AI exposes whether your marketing was optimized for performance theater or actual infrastructure.

The shift requires moving from campaign-first to backbone-first. That means:

A unified data foundation where customer, product, and engagement data lives in one governed environment

Clear ownership across functions with decision rights over tools, data access, and risk

Workflows redesigned so AI is in the critical path for content, targeting, and measurement

Success metrics tied to infrastructure health, not feature adoption

When you build this way, AI becomes the system that makes your brand the trusted answer inside platforms like ChatGPT, Perplexity, and Google's AI Overviews (formerly SGE). Authority compounds. Discoverability stabilizes across channels.

The Question That Matters

The hard part of AI isn't the models. It's whether you're willing to rebuild how your company works so turning ideas into production workflows becomes routine.

If you're not ready to treat AI as an operating model decision, all the training and tools in the world will just give you more sophisticated ways to stay exactly the same.

The core leadership question isn't "What can this model do?" It's "What kind of company are we willing to become so this technology actually compounds?"

That's the difference between organizations that build durable AI capability and those stuck in pilot purgatory.
