Your Subjective Opinions Are Killing AI's Objective Power

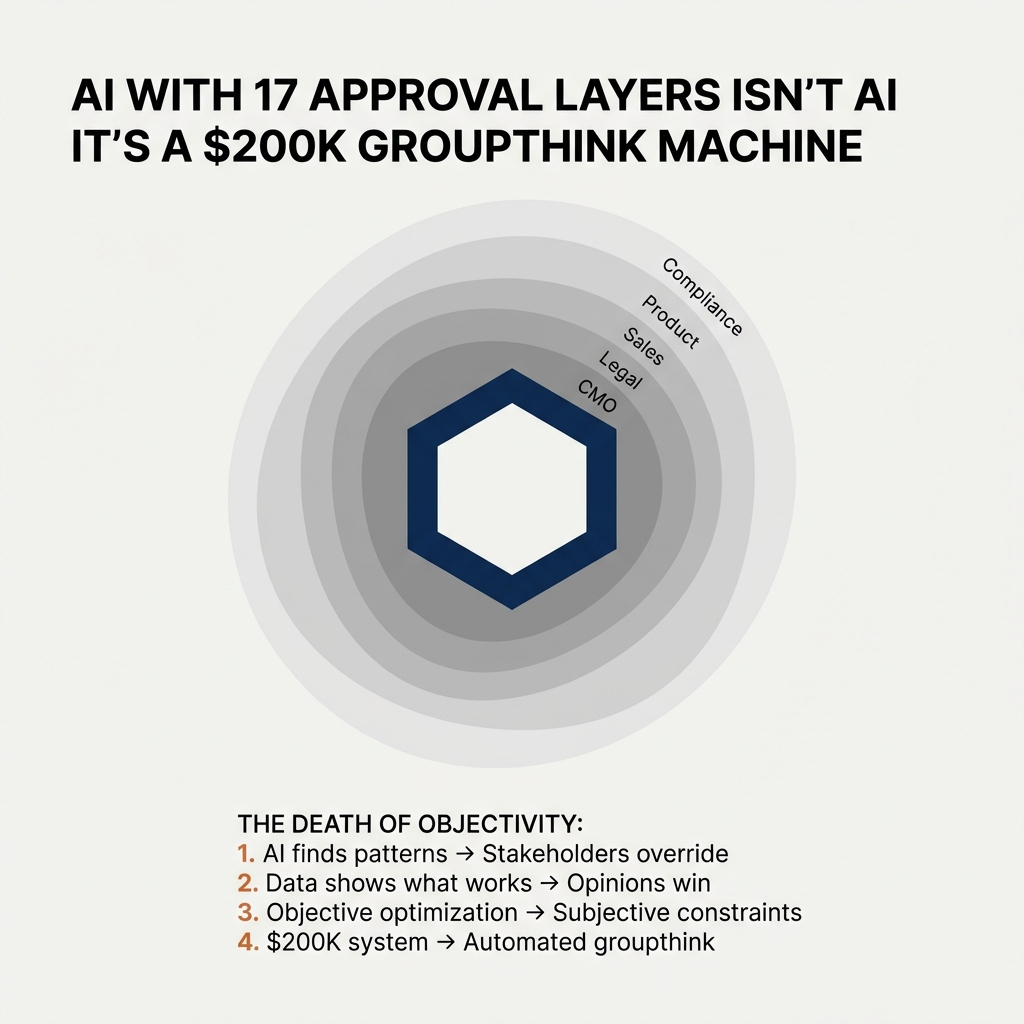

A VP of Marketing showed me their AI-powered content engine. It had seventeen approval checkpoints.

At every stage, someone had inserted their opinion about what the AI should do: the CMO's preferences on tone, the compliance team's rules about language, the product team's requirements for messaging, legal's restrictions on claims, brand's guidelines on voice, sales' insistence on certain phrases.

What started as an AI system that could analyze millions of data points to identify what actually drives conversions had been reduced to an automated enforcement tool for subjective human preferences.

The AI wasn't learning from outcomes anymore. It was learning to satisfy internal stakeholders.

When I asked what metrics improved since launch, the answer was telling: "Everyone's much happier with the content now." Not conversion rates. Not pipeline. Not engagement. Happiness with the content.

They'd built a $200K system to automate groupthink.

Here's the problem most companies won't admit: they're terrified of letting AI be objective.

AI's entire value is that it can find patterns humans miss, make decisions without ego or politics, and optimize for outcomes instead of opinions. But the moment it produces something that contradicts what the CMO "thinks works" or challenges how sales "has always done it," someone rewrites the constraints.

The result: you get all the cost and complexity of AI, with none of its objectivity. You're not building an AI-powered inbound engine. You're building an expensive way to enforce subjective opinions at scale.

And that's exactly where most B2B marketing teams are stuck: layering so many subjective constraints onto AI that they've neutralized the one thing that makes it valuable.

The Subjective Inputs Destroying Objective AI

Here's what AI is designed to do in an inbound engine:

Identify patterns objectively - Find what actually drives conversions, not what you think drives conversions

Optimize for measurable outcomes - Maximize pipeline, reduce CAC, improve conversion rates

Test without bias - Run variations without ego attached to which one wins

Learn from real behavior - Adjust based on what prospects actually do, not what stakeholders prefer

Scale decisions objectively - Make the same data-driven choice a million times without fatigue or politics

Here's what happens when you inject subjective human opinions:

"We don't like that tone" - Overriding AI recommendations because it doesn't match someone's stylistic preference, even when the data shows it performs better

"That's not how we talk about this" - Forcing AI to use approved corporate language instead of what resonates with prospects

"Legal needs to review every output" - Making humans approve every AI decision, which means the AI never learns what actually works in the wild

"Sales wants these keywords included" - Inserting requirements based on internal politics, not customer behavior

"The exec team prefers this format" - Constraining the AI to produce what makes internal stakeholders comfortable, not what drives results

Every one of these inputs feels reasonable in the moment. But collectively, they transform AI from an objective optimization engine into a subjective preference enforcer.

You're not getting AI's intelligence. You're getting automation of your biases.

The Real Problem: Everyone Thinks They Know Better Than the Data

76.6% of marketing organizations now have formal AI policies. But here's what those policies actually do: they codify subjective human opinions as constraints on objective AI systems.

Meanwhile, 71.6% of those same organizations admit they have no ROI targets for AI.

Think about that: three-quarters of companies have policies dictating what AI can and can't do, but nearly as many have never defined what success looks like.

They've optimized for control over outcomes. For comfort over performance. For making stakeholders feel heard over letting the data speak.

The result is AI systems that are objectively capable of finding the best path to conversions but are subjectively constrained to only explore paths that "feel right" to people who've never run a multivariate test in their lives.

How Subjective Opinions Infiltrate Objective AI

The death of AI objectivity happens in predictable stages:

Stage 1: AI identifies an objective pattern

"Prospects who engage with Case Study A convert 3x better than those who see Case Study B. The model recommends prioritizing A in all nurture flows."

Stage 2: Someone's opinion enters the room

"But Case Study B is our CMO's favorite. And it features our biggest logo. And sales loves talking about it. We can't deprioritize it."

Stage 3: The constraint gets encoded

"Update the model: Case Study B must appear in at least 40% of all experiences, regardless of predicted performance."

Stage 4: The AI optimizes within the subjective constraint

Now the AI isn't finding the best path to conversion. It's finding the best path to conversion that includes the CMO's favorite case study 40% of the time.

Stage 5: Performance suffers, but no one connects it to the constraint

Conversion rates are lower than projected. The team blames the AI model, the data quality, or the channel mix. Nobody blames the subjective override that prevented the AI from doing what it was designed to do.

Stage 6: More opinions get layered in

"Maybe the AI needs more input from product marketing. Let's add their messaging requirements to the model constraints."

This is how you end up with an "AI-powered" inbound engine that's really just an expensive way to enforce committee-designed experiences.

The AI isn't making decisions anymore. It's executing a consensus.
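To put a rough number on what that 40% quota costs, here is a minimal sketch of the arithmetic behind the Case Study A/B stages above. It rests on loud assumptions: the 2% baseline conversion rate for Case Study B is hypothetical, the "3x" figure is treated as a simple rate multiplier, and the unconstrained model is assumed to show Case Study A every time. The function name `blended_rate` is illustrative, not part of any real tool.

```python
def blended_rate(rate_a: float, rate_b: float, share_b: float) -> float:
    """Expected conversion rate when a fixed share of experiences must show B."""
    if not 0.0 <= share_b <= 1.0:
        raise ValueError("share_b must be between 0 and 1")
    return (1.0 - share_b) * rate_a + share_b * rate_b


# Hypothetical baseline: B converts at 2%, A converts at 3x that rate.
rate_b = 0.02
rate_a = 3 * rate_b

# Assumption: without the quota, the model would always show A.
unconstrained = blended_rate(rate_a, rate_b, share_b=0.0)
# With the encoded constraint: B must appear in 40% of experiences.
constrained = blended_rate(rate_a, rate_b, share_b=0.40)

relative_loss = 1.0 - constrained / unconstrained

print(f"unconstrained: {unconstrained:.2%}")
print(f"constrained:   {constrained:.2%}")
print(f"relative loss: {relative_loss:.1%}")
```

Under these assumptions the quota alone removes roughly a quarter of the expected conversions, whatever baseline rate you plug in, because the loss depends only on the 3x ratio and the 40% share. That is the gap that later gets blamed on the model, the data quality, or the channel mix.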

Why Everyone's Terrified of Objective AI

The real reason companies layer subjective constraints onto AI isn't about risk management or compliance. It's about control.

Truly objective AI is threatening because it doesn't care about:

The CMO's favorite messaging

The sales team's preferred positioning

The founder's opinions about the market

Years of "best practices" that were never actually tested

Internal hierarchies and who gets a say

AI just looks at the data and says: "This works. That doesn't. Here's what you should do."

And that's terrifying for organizations built on consensus, committees, and HiPPOs (the Highest Paid Person's Opinion).

What happens when the AI recommends deprioritizing the product the CEO loves? Or suggests messaging that contradicts years of brand positioning? Or identifies that your most expensive content assets are your worst performers?

Suddenly you're not just deploying AI. You're challenging power structures, questioning sunk costs, and forcing people to choose between what they believe and what the data shows.

So instead of letting AI be objective, companies add guardrails: "You can optimize, but only within these approved parameters." "You can test, but these elements are non-negotiable." "You can recommend, but humans will review and adjust."

Every guardrail is a subjective opinion disguised as risk management. And every opinion reduces AI from an objective optimization engine to a constrained automation tool.

You end up with the worst of both worlds: all the complexity and cost of AI, none of the objectivity that makes it valuable.

When You Can't Measure What AI Actually Does

Here's the impossible position marketers are in: you're being asked to prove ROI for an AI system that's not allowed to operate objectively.

The AI identifies that shorter subject lines convert better, but brand insists on full product names. The AI recommends prioritizing behavioral data, but legal restricts it to demographic fields. The AI suggests real-time personalization, but governance requires batch processing and human review.

So what are you actually measuring? Not AI performance. You're measuring how well a constrained, opinion-laden system performs compared to... what, exactly?

You can't say "AI increased conversions by 20%" when the AI was forced to use the CMO's preferred tone, include sales' mandatory keywords, avoid legal's restricted data, and operate within brand's approved formats.

What actually drove the result: the AI's objective intelligence, or the fact that you finally got everyone to agree on something?

This is why 75% of marketers admit their attribution systems underperform. They're trying to measure the impact of AI while simultaneously preventing AI from operating the way it's designed to.

So marketers do the safe thing: they talk about "AI-assisted workflows" and "productivity gains" and "capability building." Vague benefits that don't require proving causation.

Because if you actually tried to measure whether AI works, you'd have to admit that you've built a system where AI can't work, at least not objectively.

Objective Governance vs. Subjective Control

Not all governance kills objectivity. The question is: are your policies protecting outcomes, or protecting opinions?

Here's the difference:

Objective governance defines the boundaries, not the path. "AI must protect customer privacy and comply with data regulations" is a boundary. "AI must use these specific phrases when talking about our product" is telling AI which path to take.

Subjective control dictates the creative. When policies require specific messaging, approved formats, mandatory keywords, or stakeholder review of outputs, you're not governing AI; you're directing it.

Objective governance measures results objectively. Conversion rates, pipeline velocity, cost per acquisition. Metrics that don't care about anyone's opinion.

Subjective control measures stakeholder satisfaction. "Everyone's happier with the content now." "Legal is comfortable with the approach." "Sales likes the new messaging." None of which tell you if it's working in market.

Objective governance lets AI learn from outcomes. When something performs well, do more of it. When something underperforms, stop. The AI's training loop is tied to real market results.

Subjective control breaks the learning loop. When human review overrides AI recommendations based on preference rather than performance, the AI can't learn what actually works. It learns what humans approve.

Objective governance asks: "Did this work?"

Subjective control asks: "Do we like this?"

If you can't tell the difference between those two questions, your AI will never be more than an expensive automation tool.

How to Let AI Be Objective (Without Losing Control)

The goal isn't to remove all human judgment. It's to separate objective constraints from subjective preferences.

Define outcome metrics, not creative requirements. Tell AI "increase SQL conversion by 15%" not "use these three messaging pillars in every touchpoint." Let the AI find the path.

Set boundaries based on risk, not style. "Don't use PII without consent" is a boundary. "Always include the founder's quote" is a style preference disguised as a requirement.

Build test-and-learn cycles that override opinions. When the data shows something works, it ships regardless of who likes it. When the data shows something fails, it stops regardless of who championed it.

Let AI report objective performance, not subjective compliance. Your AI dashboard should show what's converting, what's not, and what the model recommends doing differently. Not "how many stakeholders approved this."

Accept that AI will find uncomfortable truths. Your most expensive content might underperform. Your CEO's favorite positioning might not resonate. The messaging you've used for years might be hurting conversions. If you're not willing to act on those findings, don't deploy AI.

The marketers building real AI-powered inbound engines aren't layering subjective opinions onto objective systems. They're setting clear outcome targets, defining objective boundaries, and letting AI optimize within them.

They're comfortable with AI making decisions they might not have made, because the AI has access to data they don't have and isn't constrained by the politics they are.
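One way to make "the data decides, not the room" concrete is to agree on the decision rule before anyone sees the results. The sketch below uses a standard two-proportion z-test from the Python standard library; the function names, the sample counts, and the 0.05 significance threshold are all assumptions for illustration, not a prescribed method.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Return (z, two-sided p-value) for the difference in conversion rates."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("no variation in the data; the test is undefined")
    z = (p_a - p_b) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


def ship_decision(conv_a: int, n_a: int, conv_b: int, n_b: int,
                  alpha: float = 0.05) -> str:
    """Pre-agreed rule: the variant the data favors ships, whoever championed it."""
    z, p_value = two_proportion_z_test(conv_a, n_a, conv_b, n_b)
    if p_value >= alpha:
        return "keep testing"  # not enough evidence either way
    return "ship A" if z > 0 else "ship B"


# Hypothetical counts: variant A converts 120 of 2,000, variant B 80 of 2,000.
print(ship_decision(120, 2000, 80, 2000))
```

The design point is less the statistics than the ordering: the threshold is fixed up front, so a result can't be argued away after the fact because it contradicts someone's preference.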

The One Question That Cuts Through the Noise

Before your next AI initiative, ask this: "What needs to be measurably different in 90 days?"

Not "What AI tools will we implement?" Not "What policies need approval?" Not "What's our AI transformation roadmap?"

Just: What specific business metric needs to move, by how much, in the next 90 days?

If the answer is "we'll increase qualified pipeline by 15%" or "we'll reduce cost per SQL by 25%" or "we'll cut content production time in half"—you have something you can build toward, test, and measure.

If the answer is "we'll build AI capabilities" or "we'll learn about AI" or "we'll establish responsible AI practices"—you're headed for theater.

Ninety days is long enough to build something real and short enough that you can't hide behind process. You pick one focused use case, integrate just enough to test it in production with real users, and measure whether it moves the number you said it would.

At the end of 90 days, you make a decision: scale it, refine it, or kill it. Then you run another 90-day cycle.
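The scale, refine, or kill call can be written down before the cycle starts. Here is a minimal sketch, assuming a metric where higher is better (such as qualified pipeline) and a hypothetical rule that anything reaching at least half the target lift earns a "refine". The function name and the `partial_credit` parameter are illustrative assumptions; pick thresholds that fit your own business.

```python
def ninety_day_decision(baseline: float, observed: float, target_lift: float,
                        partial_credit: float = 0.5) -> str:
    """Map a 90-day result to scale / refine / kill for a higher-is-better metric.

    target_lift is the relative improvement committed to up front (0.15 = 15%).
    partial_credit is a hypothetical cutoff: the fraction of the target that
    still justifies another cycle instead of killing the initiative.
    """
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    lift = (observed - baseline) / baseline
    if lift >= target_lift:
        return "scale"
    if lift >= partial_credit * target_lift:
        return "refine"
    return "kill"


# Hypothetical cycle: the target was a 15% increase in qualified pipeline.
print(ninety_day_decision(baseline=100, observed=116, target_lift=0.15))
print(ninety_day_decision(baseline=100, observed=108, target_lift=0.15))
print(ninety_day_decision(baseline=100, observed=102, target_lift=0.15))
```

For a metric you want to push down, such as cost per SQL, the same rule applies with the sign of the lift flipped.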

That cadence forces clarity. It prevents endless pilots that never graduate. It keeps AI tied to real business outcomes instead of innovation theater.

And if your governance framework can't accommodate a 90-day experiment with clear outcomes and reasonable guardrails, that's your answer. You don't have a governance problem. You have a governance theater problem.

Objectivity Is the Infrastructure

At Authority Engine, we see this play out in a specific way: brands trying to build AI-powered inbound engines on subjective, opinion-driven foundations.

The problem isn't just that AI policies are restrictive. It's that the underlying infrastructure most brands operate on is inherently subjective. Entities aren't verified. Metadata is opinion-based. Trust signals are what someone decided they should be, not what data shows they are.

So even if you let AI operate objectively, it's optimizing on top of a subjective foundation. The AI can't find objective truth when the data it's trained on reflects human biases, not market reality.

That's why we focus on authority infrastructure: objective, verifiable signals that both your AI and external AI systems can trust. Entities that are confirmed, not assumed. Data that's synchronized across sources. Trust signals engineered from measurable outcomes, not stakeholder opinions.

When your foundation is objective, AI can be objective. You can set clear outcome targets (visibility, qualified demand, pipeline) and let AI optimize toward them without layers of subjective override.

The subjective approach: immaculate policies, exhaustive approvals, consensus-driven outputs, and mediocre results.

The objective approach: clear boundaries, measurable outcomes, data-driven decisions, and AI that actually works.

Your competitors aren't debating tone and messaging in committee rooms. They're letting AI find what works, test it objectively, and scale it fast.

The question isn't whether you trust AI. It's whether you trust data more than opinions.
