The Moment AI Stops Being a Project and Becomes How You Run

There's a specific moment when AI transitions from engineering experiment to operating infrastructure.

It happens when a functional leader starts owning the KPIs instead of asking the tech team for updates.

I've watched this inflection point play out across dozens of organizations. The pattern is consistent. The technology works. The models perform. The integrations are live.

Then nothing changes.

The workflow never becomes mandatory. Usage stays optional. Nobody's number depends on it.

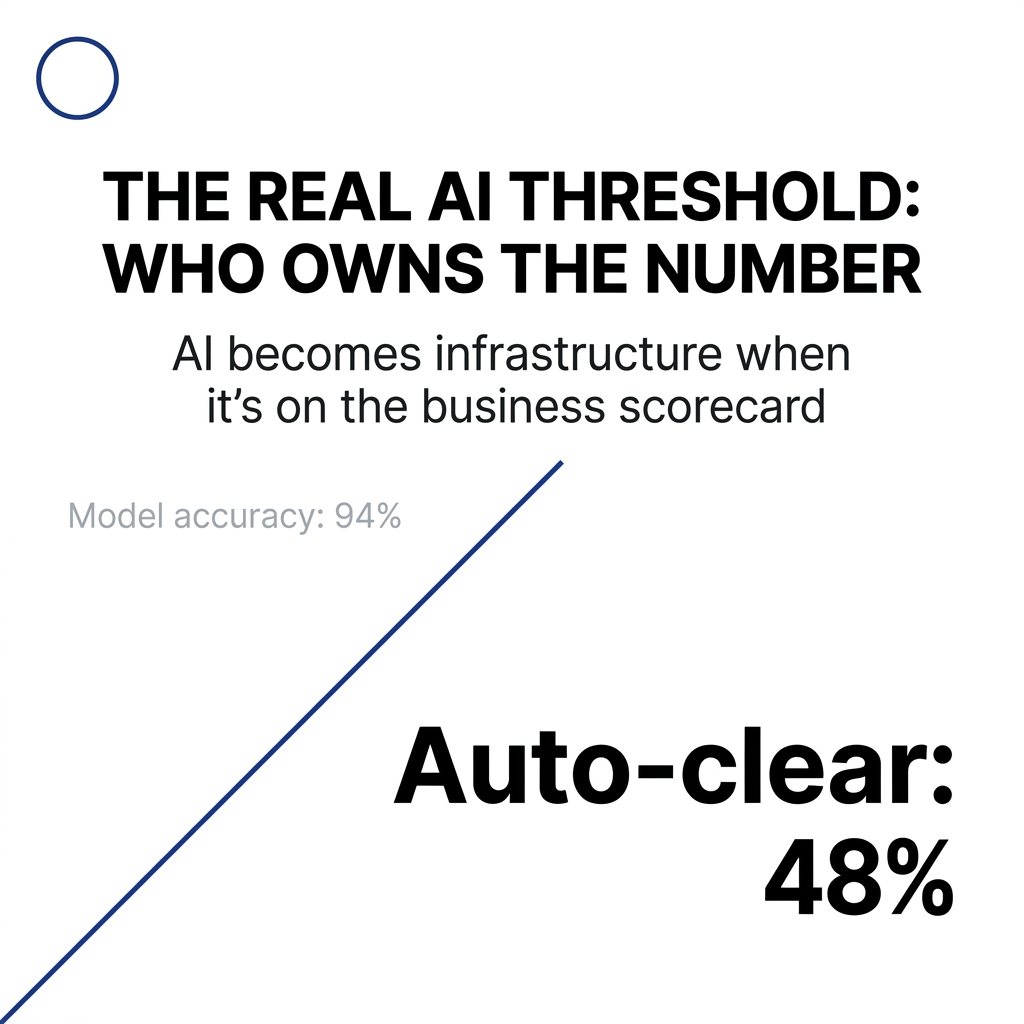

The real threshold has nothing to do with model accuracy or data quality. It's about who owns the decision once AI is in the loop.

When the COO Stops Asking "How's the Model?"

I saw this shift clearly in a quarterly business review for a claims operation.

For two quarters, the CIO presented model precision metrics and GPU spend. The COO and CFO nodded politely, then moved on to "real" numbers like loss ratios and cycle times.

The third review opened differently.

The COO spoke first: "We committed to taking average claim resolution from 9 days to 5. We're at 5.6. The claims engine is auto-clearing 48% of low-risk cases. I want us at 60% by year-end."

Nobody asked for a model performance slide.

The CFO jumped in: "That 48% is worth about 11 FTEs of capacity. If we get to 60%, we can absorb next year's volume growth without adding headcount. What do you need from policy, training, or product to safely push that number up?"

Three things changed in that moment.

First, AI KPIs became business KPIs. Auto-clear percentage wasn't a fun fact from the data team. It was a lever tied directly to resolution time, staffing plans, and unit economics.

Second, questions shifted from validation to optimization. Instead of "Is the AI accurate enough?" they asked "Which claim types are still too risky to auto-clear?" and "Where are humans overriding the engine, and why?"

Third, ownership became explicit. The COO closed with: "I'm taking the 60% auto-clear rate as an ops target for next year. IT and data are partners, but this sits on my scorecard."

From that point forward, AI metrics showed up on the COO's and CFO's dashboards. The data team still watched model quality and drift, but the headline KPIs belonged to the business.

That's what the handoff looks like. Executives stop asking for AI status updates and start arguing over AI-driven metrics as part of running the company.

The Four Signals That AI Has Crossed the Line

After watching this pattern repeat, I look for four specific signals. If I see these, I stop calling it an experiment and start treating it as infrastructure.

Signal 1: There is a named workflow, a KPI, and an owner

The workflow has a name in the operating model. Claims engine. Contract engine. Refund rail.

A business leader has that AI-driven KPI on their scorecard. Performance reviews and quarterly business reviews talk about that KPI without caveats like "it's just a pilot."

When I hear "Jane owns time-to-first-response and auto-resolution rate," I know we've crossed a threshold.

Signal 2: The AI layer is the default path

People don't "go to the AI" anymore. The work flows through it by default.

If you turned the AI off tomorrow, core work would stall and you'd declare a severity-1 incident. SOPs and playbooks assume the AI layer is in the loop.

The litmus test: frontline staff complain when it's down, not when it's up.

Signal 3: Rules and authority live in a shared layer

The organization has externalized its decision logic into a layer the business can see, change, and govern.

There is a central place where you can inspect who can auto-approve what, under which conditions. Changing how the process works means updating rules or workflows in that layer.

Risk and compliance talk about guardrails and thresholds in that shared layer instead of manually reviewing every AI output.
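One way to picture that shared layer is as rules stored as data, kept separate from the model that scores each case. The Python sketch below is purely illustrative. The `AuthorityRule` fields, claim types, thresholds, and owners are hypothetical and are not taken from any system described here. It shows how "who can auto-approve what, under which conditions" can be read and changed by the business, and how the auto-clear rate follows directly from those rules.

```python
from dataclasses import dataclass

# Hypothetical authority rule: a sketch of "who can auto-approve what, under which conditions".
@dataclass(frozen=True)
class AuthorityRule:
    claim_type: str
    max_amount: float      # auto-clear only at or below this claim amount
    max_risk_score: float  # model risk score (0.0 to 1.0) must be at or below this
    owner: str             # business leader accountable for this rule

# The shared layer: rules live as data the business can inspect and change.
# All values below are illustrative placeholders.
RULES = [
    AuthorityRule("windshield", max_amount=1_500, max_risk_score=0.20, owner="Head of Claims"),
    AuthorityRule("water_damage", max_amount=5_000, max_risk_score=0.10, owner="Head of Claims"),
]


def route(claim_type: str, amount: float, risk_score: float) -> str:
    """Auto-clear if any rule authorizes the case; otherwise send it to a human."""
    for rule in RULES:
        if (
            rule.claim_type == claim_type
            and amount <= rule.max_amount
            and risk_score <= rule.max_risk_score
        ):
            return "auto_clear"
    return "human_review"


def auto_clear_rate(decisions: list[str]) -> float:
    """Share of cases the engine cleared without a human: the KPI the business owns."""
    if not decisions:
        return 0.0
    return decisions.count("auto_clear") / len(decisions)


if __name__ == "__main__":
    cases = [
        ("windshield", 900, 0.05),
        ("windshield", 2_000, 0.05),
        ("water_damage", 3_000, 0.30),
        ("water_damage", 4_000, 0.08),
    ]
    decisions = [route(*case) for case in cases]
    print(decisions)
    print(f"auto-clear rate: {auto_clear_rate(decisions):.0%}")
```

In this framing, pushing the auto-clear rate up becomes a rule change that the named owner signs off on (for example, widening `max_risk_score` for one claim type), not a request to retrain the model.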

Signal 4: Leadership talks in outcomes, not model stats

Executives don't ask "How's the model doing?" They ask "How do we move auto-clear from 50% to 65% without increasing error rates?"

AI shows up in board decks as a driver of cycle time, cost, capacity, or revenue. The COO and CFO reference AI-driven metrics in the same breath as utilization, margin, or NPS.

When they plan headcount or capacity, they assume that AI-orchestrated workflow is there and reliable.

When I see all four signals, I stop treating it as "AI work" at all. At that point, it's just how the company runs.

Why the Budget Line Matters More Than the Technology

The budget line is how the company encodes who is allowed to care and who is forced to trade off.

When AI spend lives on IT's budget, it's evaluated like tooling. Success is framed in technical terms: uptime, usage, theoretical cost savings.

When it moves to a functional leader's P&L, the conversation changes completely.

The Head of Claims is literally deciding: "Do I fund more adjusters, or do I put that money into the claims engine to handle volume?"

The Head of CX is weighing: "Do I open another support region, or do I push auto-resolution from 40% to 60%?"

Those trade-offs force clarity about what AI is for in that domain. It becomes a concrete alternative to traditional spend.

Roadmaps shift from feature-driven to operating commitments. "We will take average resolution from 9 to 5 days." "We will absorb 20% more volume with flat headcount." "We will cut write-offs by 15%."

Leaders now kill or accelerate AI work based on P&L impact.

Accountability for failure gets real. If an AI-driven workflow doesn't deliver, the owner still has to hit their number. They can't hide behind "the pilot didn't pan out."

That pressure changes behavior. They get serious about change management, process redesign, and governance because they pay the price if the workflow doesn't land.

Once a functional leader is paying for it, they will do the hard work to make sure it isn't just a science project.

The Language Shift That Reveals the Transition

You can hear the ownership shift in how people describe their work.

Early on, a B2B services client talked about their contract workflow like this: "We're using AI to help legal review contracts faster." "The AI model is getting better at spotting risky clauses."

They still saw AI as an add-on that helps people do the old process.

Four months after go-live, the COO opened a review meeting with: "Here's how our contract engine works now: Standard deals go straight into the contract engine; it classifies the agreement, applies our playbook, and either clears it or routes it to legal with the risks highlighted."

Nobody said "AI" for the first 20 minutes.

They said things like: "If we tweak the thresholds in the engine, we can move another 10% of deals into auto-approval." "The engine surfaced that half our exceptions are actually outdated rules we never updated." "Sales needs to learn how to feed the engine good inputs."

Three things became clear from that language shift.

AI had become invisible infrastructure. They talked about the system of work, not the technology. The AI was now plumbing.

Ownership moved from IT to the business. Legal and sales were arguing about rules and thresholds. The COO was asking "What policy change gets us the next 2 days out of the cycle?"

They understood the lever was the authority layer. When they wanted better outcomes, their first instinct was "Update the playbook in the engine, adjust the routes, refine the exception criteria."

The exact moment I knew they'd crossed the line was when the Head of Legal said: "If we ever replace our CLM, fine. But don't touch the contract engine. That's how we run approvals now."

At that point, they weren't "using AI." They had a governed contract infrastructure that happened to be AI-powered, and everyone in leadership spoke about it that way.

When Governance Becomes the Fast Lane

The best example of governance making "yes" faster was in a bank's marketing and product org.

They wanted AI-generated campaigns and offers, but every idea was dying in review hell. Legal, risk, compliance, brand, all in series.

They built governance into the rails instead of into meetings.

They created three risk tiers with pre-agreed rules. Low-risk uses like internal productivity and draft copy were pre-approved. Medium-risk uses like customer-facing content within standard policies got pattern-based checks and automated guardrails. High-risk uses that touched pricing fairness or regulated promises went through formal review.

They turned key rules into automated checks in the tooling. Forbidden phrases, required disclosures, targeting constraints, and data-use limits were enforced at runtime.

They created a paved path for teams who stayed within guardrails. If a squad used the approved models, data sources, and templates, they could ship without going back to the central committee each time.
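The three mechanisms above (risk tiers, runtime checks, and a paved path) can be sketched as a small policy function. This is a minimal Python illustration under stated assumptions: the forbidden-phrase list, disclosure text, segment allow-list, and model name are hypothetical placeholders, and a real bank's rules would be defined by its legal, risk, and compliance teams. Unrecognized tiers fall through to formal review, so the default is the cautious path.

```python
from dataclasses import dataclass

# Hypothetical policy values for illustration only.
FORBIDDEN_PHRASES = ("guaranteed approval", "risk-free")
REQUIRED_DISCLOSURE = "Terms and conditions apply."
ALLOWED_SEGMENTS = {"tier-2"}
APPROVED_MODELS = {"bank-approved-model"}


@dataclass
class CampaignRequest:
    risk_tier: str  # "low", "medium", or "high"
    model: str
    segment: str
    copy_text: str


def run_automated_checks(req: CampaignRequest) -> list[str]:
    """Runtime guardrails for medium-risk, customer-facing content."""
    problems = []
    lowered = req.copy_text.lower()
    for phrase in FORBIDDEN_PHRASES:
        if phrase in lowered:
            problems.append(f"forbidden phrase: {phrase!r}")
    if REQUIRED_DISCLOSURE not in req.copy_text:
        problems.append("missing required disclosure")
    if req.segment not in ALLOWED_SEGMENTS:
        problems.append(f"segment not allowed: {req.segment}")
    if req.model not in APPROVED_MODELS:
        problems.append(f"model not on paved path: {req.model}")
    return problems


def decide(req: CampaignRequest) -> str:
    """Route a request by risk tier; only medium-risk work runs the automated checks."""
    if req.risk_tier == "low":
        return "pre-approved"
    if req.risk_tier == "medium":
        problems = run_automated_checks(req)
        if not problems:
            return "green-lit: register the use in the catalog"
        return "blocked: " + "; ".join(problems)
    # "high" and anything unrecognized go to the formal review path.
    return "formal review"


if __name__ == "__main__":
    ok = CampaignRequest("medium", "bank-approved-model", "tier-2",
                         "Save more this spring. Terms and conditions apply.")
    bad = CampaignRequest("medium", "bank-approved-model", "tier-2",
                          "Guaranteed approval, apply today!")
    print(decide(ok))
    print(decide(bad))
```

Because the criteria are encoded, a squad can run the same checks before asking for approval, which is what lets human reviewers concentrate on genuine edge cases.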

Before this, a PM would say "We want to use AI to personalize offers," and the answer was "Come to the next governance council."

Afterward, the conversation sounded like: "This is a medium-risk use. We're using the bank-approved model, only on Tier-2 customer segments, with the standard disclosure block. We've passed the automated checks." Risk would respond: "If you stay on the paved path, you're green-lit. Just register the use in the catalog."

"Yes" got faster because the criteria for yes were explicit and encoded. Teams knew in advance how to design something approvable. Reviews focused on true edge cases. Execs gained confidence from traceability and logs.

The signal that governance was working: new AI-powered campaigns went from one per quarter with three committees to multiple per sprint on the paved path. The risk team still slept at night.

Governance stopped being the speed bump and became the fast lane, as long as you stayed inside the guardrails.

The Biggest Blind Spot Leaders Miss

Most leaders still treat AI governance as controlling a tool instead of owning a decision.

Until that flips, everything else is theater.

Most governance decks talk about models, prompts, vendors, and data usage. Important, but the real risk and value live in the decisions those systems influence.

The blind spot: no one has written down "For this workflow, once AI is in the loop, who owns the decision and its consequences?"

Escalation paths are fuzzy. Override rules are implicit. When something goes wrong, everyone can plausibly say "That wasn't really my call."

Leaders realize this too late, usually after the first serious incident, when they discover they have model cards and policy PDFs but no clear answer to "Who was actually accountable for this outcome?"

A lot of organizations just take their existing IT governance, replace "application" with "AI," and call it a framework.

The blind spot: traditional IT governance assumes systems are relatively static and deterministic. AI systems are probabilistic, adaptive, and increasingly agentic. They initiate actions.

You can't bolt that onto old structures and expect it to work. You need an AI operating model that explicitly says who owns strategy, data, risk, model quality, delivery, and adoption.

They only see the gap when AI starts making or recommending decisions across silos and their old committee structure can't keep up.

Leaders obsess over the models they train and the agents they deploy, but a growing share of their risk and exposure sits in third-party and shadow AI. Tools their teams adopted. SaaS features that quietly added AI. Vendor systems making decisions on their behalf.

The blind spot: no inventory of where external AI is already in critical flows. No governance over those external decision points, even though customers and regulators won't care whether it was "your model" or a vendor's.

By the time they notice, business units are deeply reliant on these tools, and unwinding or governing them is far harder.

Many leaders think they've done change management because they ran training on prompts and tools.

The blind spot: they haven't actually redesigned roles, processes, and incentives around AI-participating decisions. Managers still own the same KPIs. Staff are still rewarded for old behaviors. AI is bolted on instead of built in.

Most AI initiatives stall because no one redefined who owns what once AI enters the workflow.

If I had to put it in one line: Most leaders overestimate the risk of AI going rogue and underestimate the risk of nobody clearly owning what AI is allowed to decide, on whose behalf, and under which rules.

That's the blind spot that quietly kills more AI efforts than any technical limitation.

What Actually Happened When We Got It Wrong

The clearest miss I've seen was a customer support automation rollout where everything looked right on paper except for who actually owned it.

We designed an AI-orchestrated triage and response workflow for a SaaS company's support org. Technically, it worked. Tickets were auto-classified, common issues got suggested replies, and SLAs improved in the pilot group.

We put ownership in the wrong place. The project sat under the CIO and Head of Data, with "strong partnership" from Support.

In steering meetings, the CIO talked about model accuracy, latency, and integration status. The Head of Support rarely spoke first.

When we hit trade-offs like "Do we change the queue structure?" or "Do we adjust agent KPIs to reward using the engine?" everyone looked at Support, but Support didn't own the budget or the success criteria. It wasn't on their scorecard.

Frontline managers got a mixed message: "Use the new AI tools, but you're still measured on the old metrics."

Agents used the AI when it was convenient, then bypassed it under pressure. Support leaders quietly prioritized headcount and traditional tooling because that's what they were actually accountable for. The CIO's team owned something they couldn't force into the day-to-day.

The painful moment was a quarterly business review where the CIO reported good model metrics and partial adoption, and the COO asked the Head of Support: "Would you notice if we turned this off tomorrow?"

They hesitated, then said honestly: "It would be annoying for some agents, but it wouldn't change my staffing plan or my SLA commitments."

That was the diagnosis in one sentence. Wrong owner.

We reset the structure. Support took primary ownership. AI and IT became enabling partners. The Head of Support got explicit targets: percentage auto-resolution, time-to-first-response, and a linked staffing plan.

The budget line for the AI engine moved into the Support cost center. Now the Head of Support was choosing between "more people" and "more throughput via the engine" with their own dollars.

We changed KPIs so adoption and proper use of the AI workflow were part of performance. Managers got credit for higher auto-resolution rates.

Six months later, when we asked the same question, the Head of Support didn't hesitate: "Yes. My team would drown. This is our front line now."

The lesson: if the person whose number is on the line isn't the owner, the AI never becomes infrastructure. It stays a helpful tool someone else built.

The Inflection Point in Practice

The first AI workflow I saw that really became infrastructure wasn't glamorous. It was invoice coding and approval routing for a multi-entity services business.

They had already done all the cool experiments: copilots, dashboards, chatbots over documentation. None of those changed how the month-end actually ran.

The thing that quietly became infrastructure was a workflow that read incoming invoices, classified them against a canonical chart of accounts and cost centers, checked against contract and PO data, and routed them for approval under explicit authority rules.

Then it just kept doing that every single day.

What made it different from the experiments:

No one logged in "to use the AI." Invoices arrived, got processed, and showed up in the AP system with suggested codes and approvals. The AP team lived in their existing tool. The AI lived entirely in the plumbing.

The business KPI moved and stayed moved. Close time dropped. Exception rates were tracked. Leadership started planning around "we can close in X days" because the new workflow was reliable. They spoke in terms of cycle time and accuracy.

Ownership flipped to operations. When something went wrong, ops didn't file a ticket about the model. They said "We need to update the routing rule" or "We should add a check for this vendor type." They saw it as their process.

It was boring, and that was the point. After a few months, nobody asked "Is the AI still working?" any more than they'd ask if email is still working. If invoices stopped flowing, it would be a severity-1 incident. That's infrastructure.

Every flashy pilot they'd done before required someone to remember to go to the AI. This one reversed it. The work went through the AI layer by default.

The day you realize people forget it's there but they can't do their jobs if it's turned off, that's when you know a workflow has crossed from experiment to infrastructure.

What the Data Shows About This Transition

The patterns I've seen in client work match what's happening across the enterprise landscape.

Nearly three-quarters of CEOs say they are their organization's main decision maker on AI, twice the share from last year. Half of CEOs believe their job is on the line if AI does not pay off.

The stakes have fundamentally changed.

The number of organizations registering models for production use has risen 210%. This indicates that many companies focused on experimenting last year have crossed the threshold into operational AI systems.

Production deployment is where ownership accountability crystallizes.

Once an organization commits to exploring an AI solution, deals convert at nearly twice the rate of traditional software: 47% of AI deals go to production, compared with 25% for traditional SaaS. That elevated conversion reflects strong buyer commitment, and high conversion signals executive ownership.

Being accountable for hitting KPIs is increasingly insufficient. Companies also need accountability for how well the KPIs themselves measure performance. Nine out of 10 managers who use AI to create new KPIs agree or strongly agree that AI has improved their KPIs.

When functional leaders start redefining success metrics with AI, they've crossed the ownership line.

As AI systems, increasingly agentic ones, move closer to decision-making, the CFO's role becomes more demanding: anchoring strategy in economic reality while navigating greater uncertainty and autonomy. The CFOs who succeed will be those who deliberately architect decision-making, productivity, and governance systems.

The transition is shifting ownership from data science teams to infrastructure teams. As one Red Hat CTO explained: "Eventually it's going to be a CIO's problem if AI gets successful, they're going to be the ones managing it and scaling it and operating it."

Organizations have moved AI investment out of project budgets and into capital and operational infrastructure budgets. This is an accounting change that signals organizational intent. They have established dedicated AI governance functions with clear ownership, typically sitting at the intersection of technology, legal, risk and business leadership.

How to Recognize Your Own Inflection Point

You'll know you've crossed the inflection point when the language in your leadership meetings changes.

You stop hearing "We're using AI to help with X" and start hearing "Here's how our X engine works now."

You stop hearing "The AI model is getting better" and start hearing "We need to adjust the thresholds to move another 10% into auto-approval."

You stop hearing "How's the pilot going?" and start hearing "This sits on my scorecard."

The functional leader whose P&L depends on the outcome starts speaking first in the meeting. The CIO and data team become partners who enable, not owners who report.

When someone suggests turning off the AI layer, the business leader responds with urgency, not curiosity. They can't imagine running the operation without it.

The budget conversation shifts from "Can we afford this AI project?" to "If I put another $500k into this engine, can I avoid hiring 15 people next year?"

Your SOPs and playbooks assume the AI layer is in the loop. Your capacity planning assumes the AI-orchestrated workflow is there and reliable.

When something breaks, you declare a severity-1 incident and mobilize the team, just like you would for any critical system failure.

That's the inflection point. AI stops being something you're trying and becomes infrastructure that shapes how your systems of work run and how your P&L behaves.

The technology didn't change. The ownership did.
