Why Marketing Teams Keep Buying AI Tools That Never Get Used

Marketing leaders admit to wasting budget on AI that did not deliver. Some report losing 20 percent or more of their marketing budget on underperforming tools.

The pattern repeats: a promising demo, an enthusiastic purchase, and then silence. Six months later, the tool sits unused while teams revert to old workflows. The problem is not the technology.

The first mistake happens before the demo call.

They Bought the Idea, Not the Workflow

Marketing teams buy AI tools for the concept of AI, not for a clearly defined workflow.

The buying decision skips the hard question: "What exact process, owned by which team, will this replace or radically speed up?" Instead, teams jump to vendor demos and feature checklists. Without a concrete use case, a success metric, and a named business owner, the AI platform is implemented alongside existing workflows rather than integrated into them.

Research shows that martech utilization, the share of a stack's capabilities that teams actually use, has dropped to as little as 33 percent. People revert to their old tools. The new spend turns into shelfware.

What Success Looks Like in 30 Days

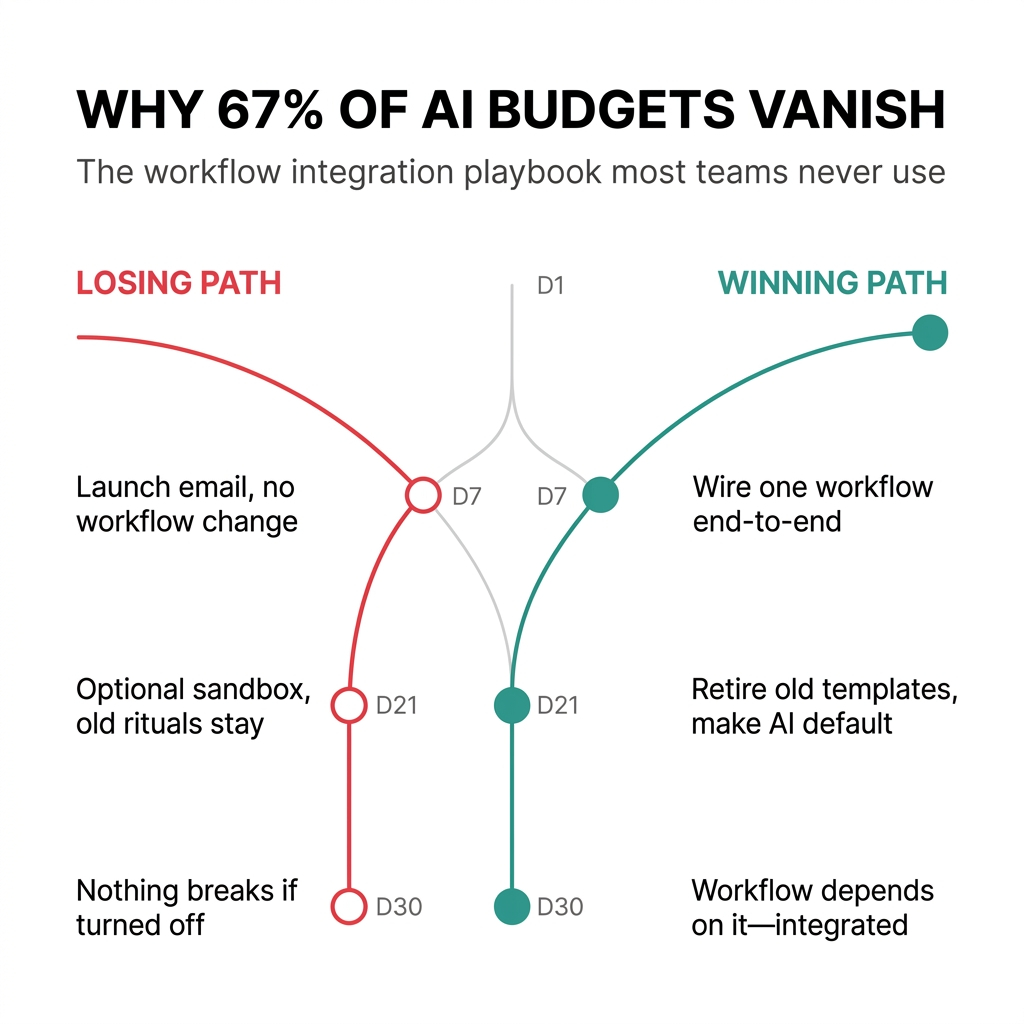

Teams that win make AI the new default step in one narrow workflow within 30 days. Teams that lose leave AI as an optional side tool.

Days 1-7: Pick a single thin-slice workflow, for example the weekly pipeline and campaign performance review. RevOps and Marketing Ops connect the AI tool to CRM, MAP, and ad accounts. They define one trigger: when a weekly report is generated, AI must pre-fill the commentary and recommended actions. The CMO announces: "Starting this week, the only deck we look at in the Monday meeting is the AI-prepared one."

Days 8-21: Run AI and humans in parallel. Ops builds the normal deck, AI builds its version, and the team compares insights and tightens prompts. By week three, they retire the old manual deck template. AI is not an extra tab. It is the reporting workflow.

Days 22-30: They measure simple outcomes: time to produce the weekly report, number of campaigns with explicit next actions, and pipeline influenced per iteration. Typical teams see a 40-60 percent reduction in prep time.
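
To make the day 22-30 measurement concrete, here is a minimal sketch of how the first two outcomes could be computed. The hours, campaign names, and field names are hypothetical placeholders, not data from any real team or export from any particular tool.

```python
from statistics import mean


def percent_reduction(before, after):
    """Percentage drop from the mean of `before` to the mean of `after`."""
    baseline = mean(before)
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (baseline - mean(after)) / baseline * 100


def share_with_next_action(campaigns):
    """Fraction of campaigns whose 'next_action' field is filled in."""
    if not campaigns:
        return 0.0
    with_action = sum(1 for c in campaigns if c.get("next_action"))
    return with_action / len(campaigns)


# Hypothetical hours spent preparing the weekly report.
manual_prep_hours = [6.0, 5.5, 6.5]  # weeks before the switch
ai_prep_hours = [3.0, 2.5, 3.5]      # weeks after the switch

# Hypothetical campaign rows from one weekly review.
campaigns = [
    {"name": "Spring webinar", "next_action": "Shift budget to retargeting"},
    {"name": "Paid social test", "next_action": ""},
    {"name": "Nurture email", "next_action": "Pause variant B"},
    {"name": "Partner promo", "next_action": "Extend two weeks"},
]

reduction = percent_reduction(manual_prep_hours, ai_prep_hours)
action_share = share_with_next_action(campaigns)

print(f"Prep time reduction: {reduction:.0f}%")
print(f"Campaigns with explicit next actions: {action_share:.0%}")
```

Tracking the same two numbers every week, starting before the switch, is what lets the team say whether the AI-prepared deck actually changed anything.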

By day 30, if you cancel the AI tool, the Monday meeting literally breaks. That is when you know it is integrated.

What Failure Looks Like

Compare that to the teams burning 20 percent or more of their budget:

Days 1-7: They sign the contract, connect one or two systems, and send a launch email: "New AI assistant is live." There is no named workflow. No calendar event changes.

Days 8-21: A few enthusiasts test it on side projects. Core rituals still use the old decks and spreadsheets. No one updates SOPs. No meeting agendas change.

Days 22-30: Usage data show a small cluster of power users and a long tail of people who never logged in more than once. When budgets tighten, finance asks: "What would actually break if we turned this off?" The honest answer is "nothing."
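
The usage pattern described above is easy to check once you have login counts per user. The sketch below is one way to do it, assuming you can export such counts from the tool; the user names, the counts, and the threshold of 10 logins for a "power user" are all hypothetical.

```python
from collections import Counter


def segment_users(login_counts, power_threshold=10):
    """Bucket users by login count.

    'never_returned': logged in once or not at all
    'power':          at least `power_threshold` logins (hypothetical cutoff)
    'occasional':     everyone in between
    """
    segments = Counter()
    for logins in login_counts.values():
        if logins <= 1:
            segments["never_returned"] += 1
        elif logins >= power_threshold:
            segments["power"] += 1
        else:
            segments["occasional"] += 1
    return segments


# Hypothetical 30-day login counts per user.
login_counts = {"ana": 42, "ben": 1, "cy": 0, "dee": 1, "eli": 3, "fay": 27}

segments = segment_users(login_counts)
share_never_returned = segments["never_returned"] / len(login_counts)

print(dict(segments))
print(f"Never logged in more than once: {share_never_returned:.0%}")
```

A large "never_returned" share next to a handful of power users is the signature of a tool that sits beside the workflow instead of inside it.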

The Conversation That Never Happens

Most marketing leaders blame "adoption challenges" or "change management." The real issue is a fundamental mismatch between how tools are bought and how work actually gets done.

The missing conversation is ruthless, pre-demo alignment: what exact job are we hiring this AI tool to do, inside which existing workflow, owned by whom, and how will we know it is working in 90 days?

Before anyone books a vendor, answer in one sentence: "If this AI tool works, what painful process disappears or becomes dramatically faster?" If you cannot agree on that, you are not ready for a demo.

The Shift From Tools to Infrastructure

When a team does this right once, they stop thinking of AI as "tools to adopt" and start treating it as infrastructure for workflows they can repeatedly redesign for leverage.

Future AI conversations should start with "Which workflow are we rebuilding next?" rather than "What can this platform do?" Every new AI investment now needs a clear link to sales productivity, customer satisfaction, or cost reduction.

The internal narrative shifts from "AI is innovative" to "AI earns its place in our capital allocation model."

What This Means for Your Budget

The forcing function is external pressure on proof: a moment when the CMO is asked, "Show me where AI is creating business value, or it is coming out of the plan."

CFOs are asking exactly how AI spend translates into revenue, margin, or cost takeout. That changes the question from "How much are we spending on AI?" to "What happens to CAC, pipeline velocity, or content cost if we remove this AI layer?"

As growth slows, organizations are pushed to consolidate stacks around fewer, deeper platforms. That consolidation naturally favors AI embedded in core workflows over point solutions.

The Path Forward

Start where you can prove, in 30-60 days, that "if we turn this AI off, revenue motion slows down." Pick one of two fast proof workflows:

AI-driven performance reporting: Use an AI reporting layer to automatically pull channel data, generate weekly insights, and recommend budget reallocation. Teams that do this well often reduce acquisition costs by double digits.

AI-assisted content production: Use AI to generate first drafts and variations for ads, emails, and landing-page copy. This typically yields 2-10x more testable variants and 30-40 percent time savings.

Make three things non-negotiable:

AI generates the first version every time. The team edits. They do not start from scratch.

The existing manual templates are retired. There is no shadow workflow to fall back to.

Key rituals are explicitly run on the AI-generated artifact as the main source.

What Happens After the First Win

The trap is thinking "That worked, now let's sprinkle AI everywhere" instead of "That worked, now let's industrialize how we did it."

After the first win, many teams spin up a dozen new pilots, each with its own prompts and manual workarounds. None shares a common data foundation, integration pattern, or governance. Momentum dissolves into chaos.

Your next move is to formalize that win into a repeatable playbook: data, integration, SOP, governance, metrics. Assign an owner to apply that playbook to the next workflow.

The Compounding Advantage

When a marketing organization operates this way, with AI wired into a few core workflows and a repeatable system for adding more, it gains a compounding edge.

Their strategy, execution, and learning operate as one feedback loop. Data flows from campaigns into AI, AI surfaces insights and recommendations, and those get pushed back into planning every week.

AI automation takes over data pulls, segmentation, and reporting, freeing marketers to focus on creative strategy and high-value experimentation. They reinvest the saved cycles into more tests, more ideas, and deeper optimization. That widens the performance gap over time.

With AI embedded in core workflows, they move from backward-looking reports to predictive systems that forecast demand and recommend next best actions. They adjust budgets and creative ahead of market shifts, while competitors are still reacting to last quarter's numbers.

Over a few cycles, that adaptability becomes a moat that their tool-equal but workflow-poor competitors cannot match.

The One Thing Nobody Talks About

AI is not a set of tools you adopt. It is a way of committing your organization to change itself whenever the data shows there is a better way to work.

If you treat AI as software, you will chase features and demos. If you treat AI as the engine that constantly rewires critical workflows in response to real performance, you are signing up for permanent re-architecture of how your team plans, executes, and measures.

You are not really deciding which AI tools to buy. You are deciding how willing you are to redesign how your org actually does its work, over and over, as AI shows you a more efficient or more profitable pattern.
